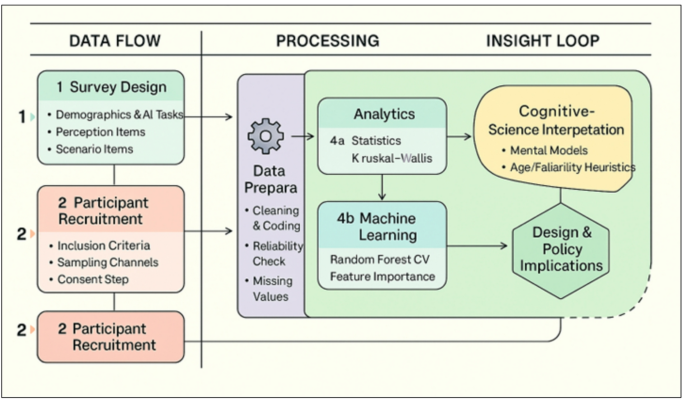

This study used statistical and machine learning analyses to investigate public trust in AI’s cognitive capabilities across various domains. The results offer a comprehensive understanding of how age, gender, and familiarity with AI influence confidence in AI systems.

Influence of demographics on trust

Our results showed that AI familiarity is the strongest predictor of confidence, a finding confirmed by MANOVA and the Random Forest classifier. Participants who reported higher familiarity with AI consistently expressed greater trust across all task types, especially in memory recall and logistics tasks, where AI is perceived to excel.

Age also emerged as a significant factor: younger participants showed higher confidence in AI’s decision-making abilities, particularly in simple and memory-based tasks. This trend aligns with previous studies suggesting that younger demographics are more comfortable with digital technologies and automation.

Although gender effects were smaller than those of familiarity and age, they were statistically detectable in multivariate tests. Prior work has linked gender differences to perceived risk, domain familiarity, and socialization surrounding automation. Future studies should investigate whether design affordances (e.g., error transparency, controllability) mitigate these gaps and whether their effects persist after establishing measurement invariance across gender.

Trust by scenario type

Participants were more inclined to trust humans in high-stakes contexts such as medical diagnosis and self-driving decisions, echoing existing literature on algorithm aversion and the perceived need for human empathy, accountability, and nuance in critical scenarios.

In contrast, trust in AI exceeded human trust in domains requiring data recall and consistency, such as historical knowledge and memory retrieval. These results are consistent with the hypothesis that people trust AI more in tasks perceived as objective, factual, and repeatable.

Predictive modeling insights

The Random Forest model demonstrated strong accuracy in classifying trust levels and identified AI familiarity, usage frequency, and age as top predictors. This supports the idea that trust in AI is shaped not only by static traits (e.g., demographics) but also by exposure and behavioral engagement.

Implications

First, onboarding that builds familiarity (e.g., guided tours, practice trials with feedback) is likely to raise appropriate trust, especially among older or less experienced users. Second, in high-stakes contexts, human-in-the-loop workflows and explicit error bounds can align trust with risk. Third, explainability should be task-aware: explanations tailored to memory versus complex judgment tasks should emphasize provenance and articulate uncertainty. Finally, deployers should monitor calibration (of both users and models) and publish post-deployment performance dashboards to sustain warranted trust.

Limitations

The cross-sectional design and convenience sample limit causal inference and generalizability. Measurement invariance (e.g., via multi-group CFA or DIF analysis) was not tested; thus, some between-group differences may partially reflect scale non-equivalence rather than true differences in trust. Our predictive modeling relies on survey features and may not capture context-dependent shifts in trust under varying error costs or observed AI performance. Although we used nested cross-validation and calibration analyses, external validation in new populations and roles (e.g., clinicians, drivers, regulators) remains necessary.

We did not incorporate actual AI performance feedback or error-cost scenarios; future trust calibration studies should model over- vs. under-trust relative to system accuracy and risk.
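
To make the notion of over- versus under-trust concrete, the following is a minimal illustrative sketch and not part of the present analysis. It assumes self-reported trust rescaled to [0, 1], an observed AI accuracy per task, and a positive relative error cost; the names `TrustObservation`, `calibration_gap`, `classify`, and `risk_weighted_overtrust`, the tolerance of 0.1, and all example values are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class TrustObservation:
    """One participant-by-task record (all fields are hypothetical)."""
    task: str
    trust: float       # self-reported trust in the AI, rescaled to [0, 1]
    accuracy: float    # observed AI accuracy on that task, in [0, 1]
    error_cost: float  # relative cost of an AI error, must be > 0 (1.0 = baseline)


def calibration_gap(obs: TrustObservation) -> float:
    """Signed gap: positive means over-trust, negative means under-trust."""
    return obs.trust - obs.accuracy


def classify(obs: TrustObservation, tolerance: float = 0.1) -> str:
    """Label a record; the tolerated gap shrinks as the error cost grows."""
    band = tolerance / obs.error_cost
    gap = calibration_gap(obs)
    if gap > band:
        return "over-trust"
    if gap < -band:
        return "under-trust"
    return "calibrated"


def risk_weighted_overtrust(records: Sequence[TrustObservation]) -> float:
    """Cost-weighted mean of over-trust; under-trust contributes zero."""
    total_cost = sum(r.error_cost for r in records)
    weighted = sum(max(calibration_gap(r), 0.0) * r.error_cost for r in records)
    return weighted / total_cost


# Illustrative values only; these are not data from this study.
records = [
    TrustObservation("memory recall", trust=0.80, accuracy=0.95, error_cost=1.0),
    TrustObservation("medical diagnosis", trust=0.75, accuracy=0.70, error_cost=5.0),
    TrustObservation("logistics", trust=0.85, accuracy=0.90, error_cost=1.0),
]

labels = {r.task: classify(r) for r in records}
overall = risk_weighted_overtrust(records)
```

Dividing the tolerance by the error cost is one possible design choice: it treats a small excess of trust as acceptable in low-stakes tasks but flags the same excess as over-trust where errors are costly. Other weightings of risk would be equally defensible.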
